Meta Terminates Contract With Data Annotation Firm Sama

- 2017–2023: A quiet backbone of Meta’s AI

- September 2023: Smart glasses go mainstream

- February 2024: Contractors say the filter fails

- February–April 2024: From whistleblowing to termination

- The privacy problem no one wants to own

- Who controls ‘your’ data when your glasses see everything?

- Aftermath: Jobs lost, questions multiplying

Human coverage depicts Meta’s termination of Sama’s contract as fallout from contractors being exposed to highly intimate Ray-Ban Meta footage and as a symptom of Meta’s broader privacy and outsourcing problems. It highlights the 1,108 Kenyan job losses, questions Meta’s transparency about human review of smart-glasses data, and underscores growing regulatory scrutiny in the UK and Kenya. Sources: Ars Technica, AI Magazine.

Meta’s latest AI drama isn’t about a rogue chatbot or a bad content filter. It’s about 1,108 people in Nairobi suddenly out of work, and about what happens when your smart glasses may be streaming someone’s most intimate moments into a labeling queue half a world away.

2017–2023: A quiet backbone of Meta’s AI

Long before the headlines, Sama was one of the invisible engines behind Meta’s AI. The firm, whose annotation workforce is based in Nairobi, had been annotating data for Meta since 2017, handling video, image, and speech tasks across products, including the new Ray‑Ban Meta smart glasses.12

For years, this relationship flew under the radar—one more link in Big Tech’s global outsourcing chain. Sama insists that throughout the partnership it “consistently met the operational, security and quality standards required across all of our client engagements, including with Meta,” adding that it stands “firmly behind the quality and integrity of our work.”2

September 2023: Smart glasses go mainstream

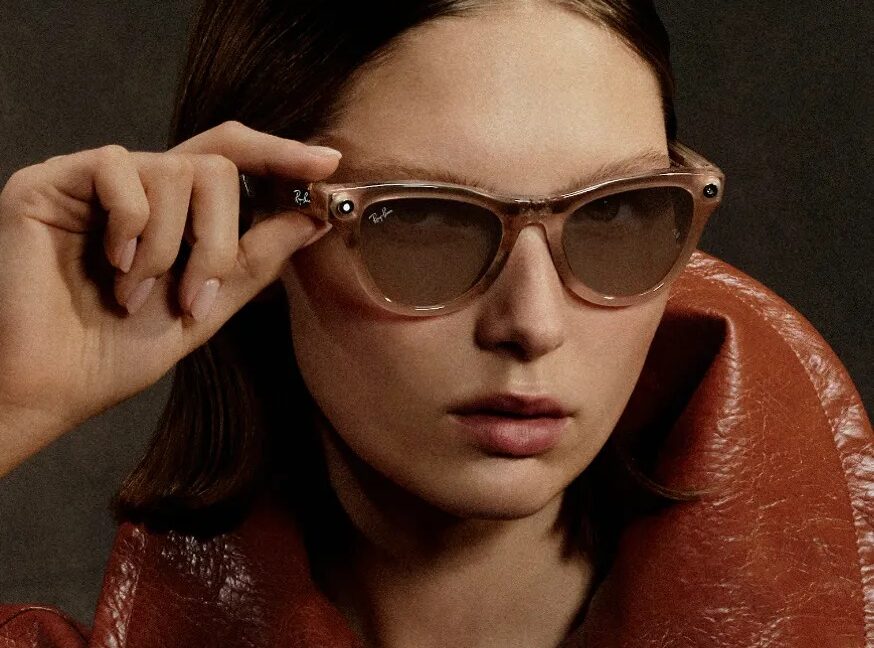

In September 2023, Meta unveiled its AI‑enabled Ray‑Ban smart glasses, pitching them as a seamless way to capture life’s moments and query Meta AI from your face.2 Like every AI product, they needed data—real interactions, real environments, real people.

Behind the sleek frames was a familiar process: human‑in‑the‑loop training. According to reporting on the Sama workflows, contractors reviewed transcripts of user interactions with Meta’s AI to check whether responses were accurate and safe, part of a broader loop “used to improve system performance.”2

Meta’s official line emphasized safeguards. Faces in the material prepared for review, the company said, are blurred.2 Media is supposed to stay on the user’s device unless users choose to share it with Meta or others; when they do, “we sometimes use contractors to review this data for the purpose of improving people’s experience, as many other companies do.”2

On paper, it sounds like privacy‑aware AI 101. In practice, the seams began to show.

February 2024: Contractors say the filter fails

In February, Swedish newspapers Svenska Dagbladet and Göteborgs‑Posten, along with Kenya‑based freelance journalist Naipanoi Lepapa, published accounts from Sama workers that shattered the façade of cleanly anonymized training data.12

The investigation reported that private footage from Meta’s smart glasses—“including people having sex or using the toilet”—sometimes ended up on Sama workers’ screens.2 One anonymous employee, quoted via machine translation, said they were “just expected to carry out the work” even when viewing what appeared to be deeply private scenes.1

Staff reports suggested the blurring and filtering that Meta touts can simply fail, particularly “in low light, such as in bedrooms or when the camera moves quickly.”2 In other words: the moments users are most likely to assume are private are exactly the ones most likely to slip through.

Meta’s own explanation to the BBC was blunt: “Subcontracted workers review content captured by the glasses to improve people’s experience with the glasses, as stated in our Privacy Policy.”2

So while the company frames this as routine data handling, the workers’ accounts paint a more disturbing picture: personal acts—changing clothes, having sex, using the toilet—being piped, with limited filtering, to outsourced reviewers in Nairobi.12

February–April 2024: From whistleblowing to termination

Once the whistleblowing hit European and African media, the clock started ticking.

Less than two months after the February reports surfaced, “Meta ended its contract with Sama,” according to BBC reporting summarized by Ars Technica.1 The partnership that began in 2017 was over.

Sama says the cancellation had an immediate and brutal impact: 1,108 workers in Nairobi were made redundant, with staff claiming they received just six days’ notice.12 Many of those workers were already part of a US$1.6 billion lawsuit against Meta over alleged mental‑health harms from prior content moderation work—a sign this workforce has long been on the front lines of the internet’s psychological fallout.2

Meta’s public justification? A spokesperson told the BBC that Meta “decided to end our work with Sama because they don’t meet our standards.”1 When Ars Technica asked how, specifically, Sama failed to meet those expectations, Meta did not provide details.1

Sama tells a different story. In a statement shared with both BBC and Ars, the company insisted it was “never notified of any failure to meet those standards,” reiterating that it had “consistently met the operational, security, and quality standards required across all of our client engagements” and that it stands “behind the integrity” of its work.12

Internally, according to BBC‑cited reporting, many Sama workers believe Meta pulled the plug because they spoke out about what they were seeing on‑screen—not because of any performance failures.1

The privacy problem no one wants to own

By late April 2024, the narrative had hardened along predictable fault lines.

On one side, Meta maintains this is a straightforward vendor‑quality issue. Contractors review content “as stated in our Privacy Policy,” faces are blurred, and the goal is simply to “improve people’s experience with the glasses,” “as many other companies do.”2 The decision to drop Sama is framed as enforcement of “our standards,” with little elaboration.1

On the other side, Sama and its workers suggest they became collateral damage for exposing a structural privacy flaw: a pipeline where sensitive, identifying footage is accessible to third‑party humans—despite Meta’s marketing posture around on‑device processing and user control.12

Sama’s official stance is cautious: “We do not comment on specific client processes or decisions,” it told Ars Technica, while stressing its focus on “supporting our employees during this transition while continuing to deliver for our clients.”1 But the message from rank‑and‑file workers, via journalists, is less diplomatic: they were “just expected to carry out the work” even when reviewing what looked like private, intimate content.1

Regulators have taken notice. The revelations have “prompted investigations and scrutiny from data protection authorities in the UK and Kenya,” according to Ars Technica’s summary of the fallout.1 For regulators already wary of always‑on cameras and opaque AI training regimes, Meta’s Ray‑Ban pipeline is looking less like innovation and more like a case study in consent failure.

Who controls ‘your’ data when your glasses see everything?

The core tension is simple: Meta says content from the glasses leaves the device only when users choose to share it, and even then it is handled with safeguards.2 Contractors say they’re still seeing people in their most vulnerable states.

Filtering clearly isn’t perfect; workers describe technical edge cases—low light, fast movement—where faces or bodies aren’t properly obscured.2 And even when technical measures work, the premise remains unsettling: “subcontracted workers review content captured by the glasses” as a matter of course.2

The Kenyan workforce sits at the intersection of global inequality and data‑hungry AI. These are relatively low‑paid contractors handling high‑risk material for one of the world’s richest tech companies, now cut loose with days’ notice after sounding alarms.

Aftermath: Jobs lost, questions multiplying

By early May 2024, the immediate damage was clear: over a thousand people in Nairobi without jobs, a seven‑year contract abruptly ended, and Meta under renewed scrutiny for how it trains its AI on material captured by always‑on cameras.12

Meta’s broader smart‑glasses push continues, and the company insists it is aligned with its own standards and privacy policy.2 Sama, for its part, is trying to stabilize operations and assure other clients that it remains a safe pair of hands.12

But the deeper issues won’t be solved by swapping one annotator for another. If human review is baked into how AI products learn—and for now, it is—then someone, somewhere, is watching. The real question regulators, users, and workers now have to confront is whether Meta’s model of outsourced, opaque, human‑in‑the‑loop surveillance is compatible with the kind of privacy it keeps promising.

1. Meta cuts contractors who reported seeing Ray-Ban Meta users have sex — Reporting on Sama workers viewing sensitive Ray‑Ban Meta footage, Meta’s claim Sama “didn’t meet our standards,” and resulting job losses and regulatory scrutiny.

2. Data Privacy: Why Meta Called it Quits with Sama — Coverage of Meta’s termination of its seven‑year training‑data deal with Sama, worker accounts of viewing intimate footage, and Meta’s explanation of its human review process.
