Anthropic Introduces 'Dreaming' Technique for AI Agents

- Early May: Anthropic quietly flips the switch

- The context problem that set this up

- What ‘dreaming’ actually does

- Research preview: gated dreams only

- Mid-roll: more power for power users

- How this changes the agent game

- Competing visions: smarter memory vs. creepier autonomy

- The road ahead: dreaming under supervision

Human coverage portrays Anthropic’s dreaming feature as an experimental reflection and memory-writing mode for Claude Managed Agents that helps them overcome context limitations by reviewing past interactions and storing key information. It is framed as a useful but incremental product enhancement, mentioned alongside other updates such as increased feature availability and doubled usage limits for Pro and Max subscribers.

Anthropic is teaching its AI agents to “dream”: not about electric sheep, but about your past projects, your team’s habits, and your recurring mistakes, so they can quietly rewrite their own playbook for next time.

Early May: Anthropic quietly flips the switch

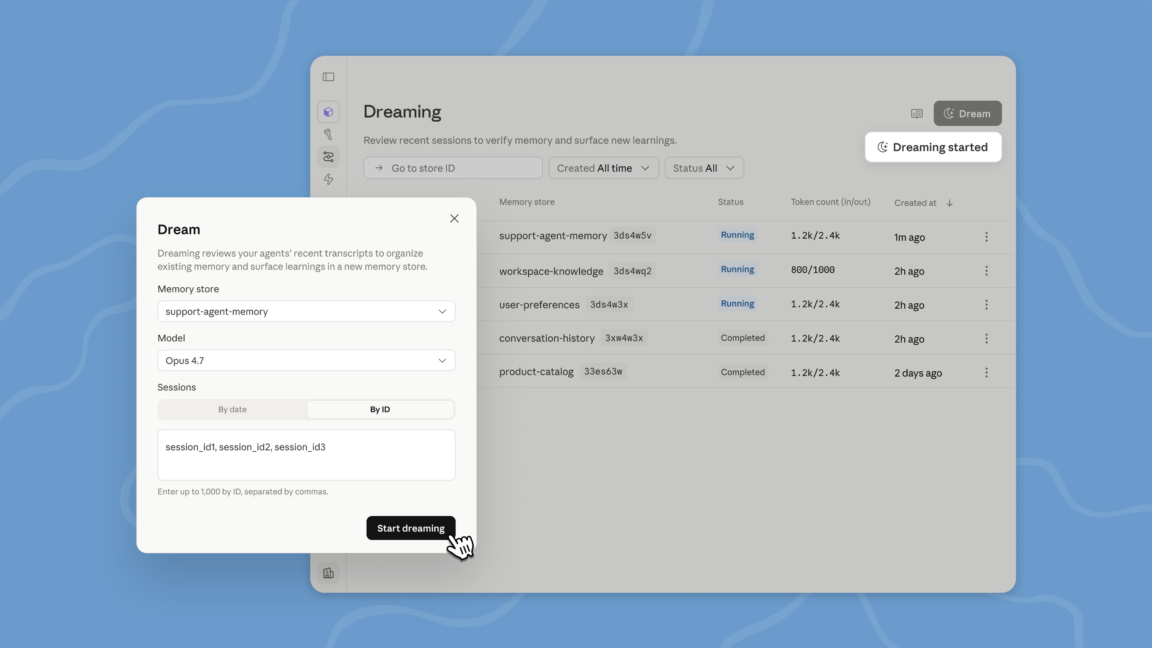

On May 6, at its Code with Claude developers’ conference in San Francisco, Anthropic unveiled a new capability for its Claude Managed Agents that it’s branding, with calculated flair, as “dreaming.”1 The name is marketing; the mechanics are mundane but consequential. Instead of passively forgetting past tasks once a context window fills up, Claude’s managed agents now run a scheduled review process that combs through recent sessions and memory stores, distills what mattered, and writes that into a persistent memory bank for future use.1

Business Insider framed it more bluntly: Anthropic is introducing a technique for AI agents to “self-improve” inside its Claude Managed Agents tool.2 In other words, your agent isn’t just answering prompts anymore — it’s periodically stepping back to study how it works, then adapting.

The context problem that set this up

Large language models like Claude live and die by their context windows — the limited amount of text they can “see” at once. For long-running projects or multi-step workflows, important details routinely fall off the back of that window. Anthropic’s own description concedes that this is a structural weakness: context is finite, projects are not.1

The industry’s duct-tape solution so far has been “compaction.” Systems periodically summarize ongoing conversations and strip away what they deem irrelevant, keeping the dialogue coherent without blowing the token budget.1 But that’s usually scoped to one chat with one agent. When teams spin up multiple agents, across multiple sessions, working on multi-hour or multi-day tasks, those compaction snapshots don’t add up to institutional memory — they’re disposable notes.
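To make the compaction idea concrete, here is a minimal sketch of a single-conversation compactor. It is an illustration only, not Anthropic’s implementation: the `Message` type, the word-count token estimate, and the first-sentence “summarizer” are all hypothetical stand-ins chosen to keep the example runnable.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def summarize(messages: list[Message]) -> Message:
    """Placeholder summarizer (assumption): keep the first sentence of each message.
    A real system would presumably ask a model to write the summary."""
    firsts = [m.text.split(".")[0].strip() for m in messages]
    return Message("summary", " | ".join(f for f in firsts if f))


def compact(history: list[Message], budget: int, keep_recent: int = 2) -> list[Message]:
    """Return the history unchanged if it fits the budget; otherwise replace
    everything except the last `keep_recent` messages with a single summary."""
    total = sum(estimate_tokens(m.text) for m in history)
    split = len(history) - keep_recent
    if total <= budget or split <= 0:
        return list(history)
    return [summarize(history[:split])] + history[split:]
```

Note what the sketch leaves out: the summary lives and dies with one conversation. Nothing here carries over to another agent or a later session, which is the gap described above.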

Anthropic’s “dreaming” feature is an attempt to turn that pile of disposable notes into something closer to a knowledge base.

What ‘dreaming’ actually does

Under the hood, dreaming is a scheduled, recurring process, not a mystical state. At defined intervals, Claude Managed Agents review past sessions and existing memory stores, then curate “specific things that are worth storing in ‘memory’ to inform future tasks and interactions.”1

Anthropic pitches it this way: dreaming “surfaces patterns that a single agent can’t see on its own, including recurring mistakes, workflows that agents converge on, and preferences shared across a team. It also restructures memory so it stays high-signal as it evolves.”1

In practical terms, that means:

- Recurring bugs or missteps in your workflows get tagged and remembered.

- Common multi-step procedures that agents keep reinventing are recognized as stable workflows.

- Company- or team-wide preferences — from coding style to tone to approval thresholds — get promoted into shared memory rather than re-specified every session.
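One way to picture the pattern-surfacing step is as a pass over notes from many sessions that keeps only what recurs. The sketch below is a toy under stated assumptions: the `(session_id, kind, note)` log format, the `dream` function name, and the `min_sessions` threshold are all invented for illustration, and Anthropic has not published how its agents actually decide what is worth remembering.

```python
from collections import defaultdict


def dream(session_logs, min_sessions=2):
    """Promote notes that recur across sessions into a shared memory.

    session_logs: iterable of (session_id, kind, note) tuples, where `kind`
    is a hypothetical label such as "mistake", "workflow", or "preference".
    Returns a dict mapping each kind to a sorted list of recurring notes.
    """
    # Track which distinct sessions each normalized note appeared in.
    seen = defaultdict(set)
    for session_id, kind, note in session_logs:
        seen[(kind, note.strip().lower())].add(session_id)

    # Keep only notes observed in at least `min_sessions` different sessions.
    memory = {}
    for (kind, note), sessions in seen.items():
        if len(sessions) >= min_sessions:
            memory.setdefault(kind, []).append(note)
    for notes in memory.values():
        notes.sort()
    return memory
```

Exact string matching is the weakest assumption here; a production system would need some fuzzier notion of “the same mistake” than identical text.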

Users aren’t completely at the mercy of this nocturnal self-editing, either. They can choose between a fully automatic mode and one where they manually review changes to memory and approve or reject them.1 That’s a small but crucial control knob: the line between smart adaptation and runaway drift is thin.
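The difference between the two modes can be sketched as a gate in front of the memory store. Everything named below (`MemoryStore`, `apply_changes`, the `approve` callback) is hypothetical and not part of any Anthropic API; the sketch only shows the shape of the choice between automatic writes and human sign-off.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    entries: dict = field(default_factory=dict)


def apply_changes(store, proposals, mode="review", approve=None):
    """Apply proposed memory changes and return the keys that were rejected.

    proposals: list of (key, new_value) pairs.
    mode: "auto" writes every proposal; "review" writes only the proposals
          for which approve(key, old_value, new_value) returns True.
    In review mode with no approver supplied, nothing is written.
    """
    if mode not in ("auto", "review"):
        raise ValueError(f"unknown mode: {mode!r}")
    rejected = []
    for key, value in proposals:
        old = store.entries.get(key)
        if mode == "auto" or (approve is not None and approve(key, old, value)):
            store.entries[key] = value
        else:
            rejected.append(key)
    return rejected
```

Defaulting to review mode, and rejecting everything when no reviewer is present, is a deliberate choice in this sketch: it fails closed rather than letting unreviewed changes accumulate.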

Research preview: gated dreams only

Anthropic is not rolling this out everywhere. Dreaming is currently in “research preview” and limited to Managed Agents on the Claude Platform.1 These managed agents are Anthropic’s higher-level alternative to building directly on the Messages API — what the company calls a “pre-built, configurable agent harness that runs in managed infrastructure,” aimed at multi-agent, longer-running tasks that might span minutes or hours.1

Access is gated: not all developers can use dreaming yet, and those who want in must request access.1 Anthropic is very clearly testing this in the relatively controlled environment of its own managed infrastructure before it becomes a standard checkbox in every dev’s toolbox.

Business Insider’s description underscores the experimental but strategic nature of the move: dreaming isn’t a flashy chatbot trick; it’s a technique “integrated within the Claude Managed Agents tool” to enable self-improvement over time.2

Mid-roll: more power for power users

The dreaming announcement arrived bundled with a quieter, but telling, capacity change: Anthropic also disclosed that 5‑hour usage limits will double for Pro and Max subscribers using Claude Code, its coding-focused experience.1

On its face, that’s just more headroom for heavy users. In context, it hints at where dreaming is likely to matter first: serious, long-running, code-heavy workflows where agents need to hold onto project-specific conventions and pitfalls over time.

How this changes the agent game

Until now, most AI agents have been glorified session workers: assign, execute, forget. Anthropic’s dreaming pushes them closer to becoming organizational actors — systems that not only do work but accumulate and refine know-how.

Ars Technica notes that dreaming is “especially useful for long-running work and multiagent orchestration,” where one-off compaction is not enough and you need a way to curate patterns “across agents, and sessions.”1

That’s a meaningful shift:

- From conversations to corpora: Instead of treating each chat as an island, the system now mines many interactions to build a shared memory.

- From prompts to preferences: Teams don’t have to re-specify how they like things done every time; the system learns and retains those preferences.

- From stateless tools to evolving colleagues: The agents start to look less like one-off scripts and more like junior teammates who get better (or at least more idiosyncratic) with exposure.

Business Insider’s framing of dreaming as a way for “AI agents to self-improve” captures both the promise and the risk.2 Improvement sounds great — until the system improves in a direction you didn’t intend.

Competing visions: smarter memory vs. creepier autonomy

Different camps are already reading Anthropic’s move through very different lenses.

The optimists: finally, useful persistence

From a productivity standpoint, dreaming is exactly what many developers have been asking for. Instead of developers repeatedly tweaking prompts or rewriting documentation to remind agents how a given team works, the system gradually captures those patterns in memory.

Ars Technica relays Anthropic’s claim that dreaming “restructures memory so it stays high-signal as it evolves,” trimming noise and amplifying the bits that matter across projects and agents.1 In large organizations, that’s tantalizing: a living playbook that updates itself on a schedule based on actual work, not just meetings and wikis.

For teams experimenting with multi-agent orchestration — fleets of specialized agents coordinating on complex tasks — a shared, evolving memory layer is almost a prerequisite. Without it, each agent is reinventing the wheel on every run.

The skeptics: a euphemism for opaque behavior

But “self-improve” is doing a lot of work in these descriptions. Business Insider’s succinct line about Anthropic introducing dreaming as a “technique for AI agents to self-improve within the Claude Managed Agents tool” leaves out the messy part: who defines improvement, and who audits it?2

When an AI system is periodically rewriting its own memory structures based on patterns it detects, there’s a risk of:

- Locking in bad habits: Recurring mistakes can be misclassified as successful workflows.

- Drift from policy: Quiet shifts in memory might gradually move behavior away from formal guidelines or compliance rules.

- Opacity: Even with a review option, understanding why the system proposed a memory change — let alone predicting its downstream impact — is non-trivial.

Anthropic’s decision to keep dreaming in research preview and require developers to request access looks, in part, like an acknowledgement of these risks.1

The road ahead: dreaming under supervision

Anthropic’s move lands at an inflection point. The frontier in AI isn’t just bigger models; it’s agents that persist, coordinate, and adapt over time. Dreaming is a step toward that future, but a carefully fenced one: confined to managed infrastructure, limited to selected developers, and wrapped in the language of “research preview.”1

From one angle, that’s cautious stewardship: test the self-improving memory system where you can watch it closely, tweak the algorithms that decide what’s important, and refine the human review tools before the feature goes wide.

From another angle, it’s a preview of a new arms race. If Anthropic’s agents can accumulate high-signal organizational memory and learn from recurring patterns, rivals will be under pressure to roll out their own dreaming analogues, even if the industry hasn’t settled basic questions about oversight and safety.

For now, Anthropic’s dream is modest: a scheduled background job that mines your AI agents’ past to make them slightly less forgetful tomorrow than they were yesterday. But in a field where memory is power, even that small shift could redefine what it means to “use” an AI, and what it means for that AI to start using you, too, as raw material for its own memory.
